Online Open Probability School (OOPS)

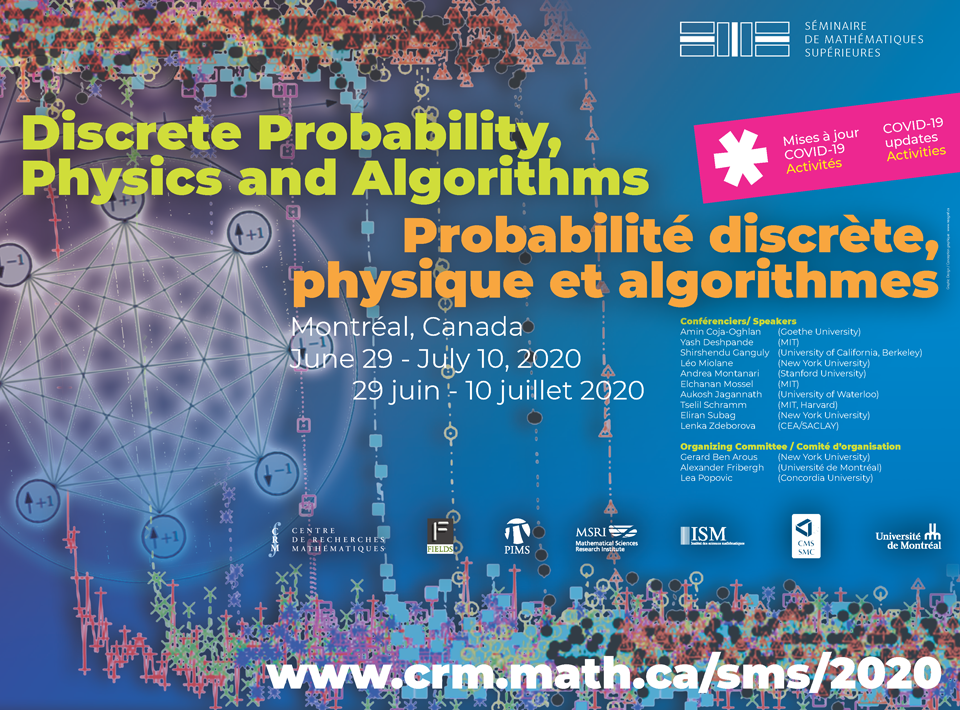

In view of the current situation, PIMS-CRM Summer School in Probability and the Summer School 2020 Séminaire de mathématiques supérieures (SMS) on Discrete Probability, Physics and Algorithms have united to run a series of mini-courses in probability this Summer: OOPS! (Online Open Probability School).

To register: https://mailman-mail5.webfaction.com/listinfo/oops

SMS 2020 - Overview

Probability theory, statistics as well as mathematical physics have increasingly been used in computer science. The goal of this school is to provide a unique opportunity for graduate students and young researchers to developed multi-disciplinary skills in a rapidly evolving area of mathematics.

The topics would include spin glasses, constraint satisfiability, randomized algorithms, Monte-Carlo Markov chains and high-dimensional statistics, sparse and random graphs, computational complexity, estimation and approximation algorithms. Those topics will fall into two main categories, on the one hand problems related to spin glasses and on the other hand random algorithms.

The part of the summer school dedicated to spin glasses will be split into three parts: an introductory course about traditional spin glasses followed by two more advanced courses where spin glasses meet computer science in addition to a talk on dynamics of spin glasses. The part of the summer school on random algorithms will consist of an introductory course on phase transitions in large random structures, followed by advanced courses on theoretical bounds for computational complexity in reconstruction and inference, and on understanding rare events in random graphs and models of statistical mechanics.

The two introductory courses on spin glasses and on random algorithms will be accompanied by three exercises sessions of one hour. A one hour exercises session will follow each of the three sessions of a course for both the introductory course on spin glasses and the introductory course on random algorithms. Exercises sessions will be led by an assistant, but will primarily focus on participation of the students.

Suggested Prerequisites

The students are expected to have previously learned the theory of random variables, law of large numbers, central limit theorem, discrete time Markov chains. The two main references for this content includes: A first course in probability by Ross (Chapters 1-8) and Markov chains by Norris (or http://www.statslab.cam.ac.uk/~rrw1/markov/M.pdf Chapter 1-10).

We also recommend Probability and Random processes by Grimmett and Stirzaker. This excellent book will cover all the important material (Chapter 1-6) in Ross and Norris. It will also provide a deeper introduction to two central tools: Gaussian vectors (Chapter 4.9) and large deviations (Chapter 5.11). These topics are also covered in Probability: theory and examples by Durrett.

We also recommend https://www.probabilitycourse.com/ by Pishro-Nik which contains an introduction to the terminology used in statistics (Chapter 8-9). Chapters 1-7 can also be used as an introduction to probability theory.

The students should also have basic notions on Markov Chain Monte Carlo and Markov chain mixing. For this, we recommend the first five chapters of Markov chains and mixing times by Levin, Peres and Wilmer available at https://pages.uoregon.edu/dlevin/MARKOV/markovmixing.pdf.

Finally, the students who are less familiar with physics terminology (such as phase transitions) or information theory (such as entropy) should look at Information physics and computation by Mezard and Montanari. We want to emphasize that this book may heavily intersect with the course given by Andrea Montanari.

For eligibility and how to apply, see the Summer Graduate Schools homepage

Due to the small number of students supported by MSRI, only one student per institution will be funded by MSRI. This school may require an additional application with SMS directly.